TL;DR:

- Content moderation protects adult creators legally, financially, and within their fan communities.

- A consistent workflow includes consent verification, text and media review, metadata scrubbing, paced uploads, and post-publish monitoring.

- Hybrid moderation systems combining AI and human judgment offer the most effective approach for complex adult content.

Most adult creators assume their fans are the first line of defense when something goes wrong. In practice, platforms detect over 90% of violating content through internal AI systems, not user reports. That changes the game: if the platform is already watching this closely, your own moderation process needs to be just as rigorous. This guide gives you a clear, practical system for moderating your content before it becomes a problem, so you protect your income, your reputation, and your community.

Table of contents

- Why content moderation matters for adult creators

- Step-by-step content moderation workflow

- Key tools and methods: AI, human, and hybrid moderation

- Dealing with gray areas and evolving trends

- A smarter moderation mindset: What creators miss

- Elevate your moderation game on Fanspicy

- Frequently asked questions

Key takeaways

| Point | Details |
|---|---|
| Moderation protects creators | A solid moderation process reduces bans, boosts trust, and protects your brand and earnings. |
| Hybrid tools are strongest | Combining AI and human checks resolves most issues and avoids costly mistakes. |
| Consent is step one | Always verify consent and documentation before posting any collaborative or sensitive content. |
| Adapt to evolving rules | Stay flexible and update your moderation practices as community standards change. |
| Make moderation routine | Treat moderation as a daily habit for long-term safety and creative freedom. |

Why content moderation matters for adult creators

Content moderation is not a task reserved for platform engineers. As a creator, you are the first editor, the first reviewer, and the first line of accountability for everything that goes out under your name. The stakes are real, and they are specific to this industry.

On the legal side, failing to moderate can lead to account bans, payment processor chargebacks, and, in serious cases, lawsuits under laws like FOSTA-SESTA. Platforms enforce zero-tolerance policies, and they act fast. One flagged post can trigger a cascade: frozen earnings, removed content, and a permanently damaged profile.

Financially, unmoderated content creates exposure that compounds over time. A single piece of content that violates community standards can trigger a wave of chargebacks from subscribers who dispute payments after a ban. Understanding how platform decisions affect creator earnings makes clear how much is at stake with every upload.

The community angle is just as important. Fans who feel safe in your space stay longer, tip more, and refer others. Moderation signals professionalism. It tells your audience that you respect them and that you take your work seriously.

Here is what strong moderation protects you from:

- Account suspension or permanent bans

- Loss of payment processing access

- Legal liability from unconsented content

- Reputational damage that kills subscriber growth

- Platform-triggered content removal without warning

“Moderation is not just about removing bad content. It is about building an environment where your audience chooses to stay and spend.”

AI-driven and hybrid moderation systems have become essential because the scale and nuance of adult content make manual-only review impossible. But the smarter play for creators is to treat moderation as a proactive strategy, not reactive cleanup. The creators building sustainable businesses in 2026 are the ones who bake moderation into their content workflow from day one.

Step-by-step content moderation workflow

A reliable moderation process does not have to be complicated. It just has to be consistent. Breaking it into defined steps removes the guesswork and turns moderation into a habit rather than a chore.

- Consent check. Before anything else, confirm that every person appearing in your content has given written, documented permission. This is not optional. Verifiable consent documentation is the single most critical factor in avoiding legal and platform violations, especially for collaborative content.

- Text review. Read every caption, title, and description before publishing. Avoid restricted keywords, phrases that suggest illegal themes, or any language that references minors, family dynamics in a sexual context, or non-consensual scenarios. Platforms scan for these triggers automatically.

- Media scan. Review your images and video for anything that could be flagged: deepfakes, content involving individuals who appear underage, or abuse-adjacent material. Use platform-native tools or third-party AI scanners as a first pass, then apply your own judgment.

- Metadata scrub. Photos and videos carry hidden data called EXIF metadata, which can include GPS coordinates, device identifiers, and timestamps. Strip this data before uploading to protect your identity and the identities of any collaborators. Free tools like ExifTool make this easy.

- Rate limiting. Avoid bulk uploading large volumes of content in a short window. Rapid-fire posting can trigger spam detection systems on many platforms and result in temporary freezes or content holds. Pace your releases.

- Post-publish monitoring. Your job is not done after you hit publish. Check comments, DMs, and reports within the first 24 hours. Respond quickly to any flagged content or user complaints. Fast action signals good faith to platform moderators.
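For the metadata scrub step, a maintained tool such as ExifTool is the practical choice, but the idea behind it is simple enough to sketch. The Python example below is a minimal, standard-library-only illustration that assumes a well-formed baseline JPEG: it walks the file's marker segments and drops every APP1 segment, which is where JPEG stores EXIF data (including GPS tags) and XMP. It does not touch other metadata locations such as IPTC (APP13) or comment segments, and it does not handle video, so treat it as an illustration rather than a replacement for a dedicated tool.

```python
import struct

SOI = b"\xff\xd8"  # Start of Image: every JPEG begins with these two bytes
APP1 = 0xE1        # APP1 segment: where JPEG stores EXIF (incl. GPS) and XMP
SOS = 0xDA         # Start of Scan: compressed image data follows
EOI = 0xD9         # End of Image


def strip_jpeg_app1(data: bytes) -> bytes:
    """Return a copy of a JPEG byte string with every APP1 segment removed.

    Sketch only: assumes a well-formed baseline JPEG with no standalone
    markers before the Start of Scan.
    """
    if not data.startswith(SOI):
        raise ValueError("not a JPEG file (missing SOI marker)")
    out = bytearray(SOI)
    i = 2
    while i + 1 < len(data):
        if data[i] != 0xFF:
            raise ValueError(f"expected a marker at offset {i}")
        marker = data[i + 1]
        if marker == 0xFF:
            # Fill byte: JPEG allows 0xFF padding before a marker; skip it.
            i += 1
            continue
        if marker in (SOS, EOI):
            # Image data (or the end of the file) follows; copy it unchanged.
            out += data[i:]
            return bytes(out)
        if i + 4 > len(data):
            raise ValueError("truncated segment header")
        # Segment length is big-endian and counts its own two bytes.
        (length,) = struct.unpack(">H", data[i + 2:i + 4])
        end = i + 2 + length
        if marker != APP1:
            out += data[i:end]  # keep every segment except APP1
        i = end
    raise ValueError("no image data found (missing SOS marker)")
```

To use it on a file, read the bytes with `open(path, "rb").read()`, pass them to `strip_jpeg_app1`, and write the result to a new file. One side effect worth knowing: the EXIF orientation tag also lives in APP1, so a photo that relied on it may display rotated after stripping.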

Pro Tip: Build a simple checklist in your notes app or a spreadsheet. Run through it for every single upload. Treating moderation like a pre-flight check makes it automatic, and it is far less stressful than recovering from a ban.
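The checklist from this tip can also live in a short script. The sketch below is a hypothetical example, not a platform tool, and it covers only three of the steps (consent, text review, metadata). The `Upload` fields, the `preflight` function, and especially the `RESTRICTED_TERMS` set are placeholders you would replace with your own records and with the terms each platform's guidelines actually name. Keyword matching is crude (it will flag an innocent "minor edits" and miss creative misspellings), so it works as a first pass before your own read-through, not instead of it.

```python
import re
from dataclasses import dataclass, field

# Hypothetical placeholder terms only. Replace with the restricted terms that
# each platform's own guidelines name; this is not an official or complete list.
RESTRICTED_TERMS = {"underage", "minor", "forced"}


@dataclass
class Upload:
    caption: str
    performers: list[str]                                   # everyone who appears
    consent_on_file: set[str] = field(default_factory=set)  # who has signed paperwork
    metadata_stripped: bool = False


def preflight(upload: Upload) -> list[str]:
    """Return a list of problems; an empty list means these checks passed."""
    problems = []
    # Consent check: every performer needs documentation on file.
    missing = [p for p in upload.performers if p not in upload.consent_on_file]
    if missing:
        problems.append("missing consent documentation for: " + ", ".join(missing))
    # Text review: whole-word match against the placeholder term list.
    words = set(re.findall(r"[a-z]+", upload.caption.lower()))
    flagged = sorted(words & RESTRICTED_TERMS)
    if flagged:
        problems.append("caption contains restricted terms: " + ", ".join(flagged))
    # Metadata scrub: a simple reminder flag you set after stripping files.
    if not upload.metadata_stripped:
        problems.append("metadata has not been stripped")
    return problems


draft = Upload(caption="New set is live!", performers=["me", "collab_partner"],
               consent_on_file={"me"})
for problem in preflight(draft):
    print(problem)
```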

Pairing this workflow with solid content creation steps turns moderation into a seamless part of your production process. Smart content curation also reduces moderation load by ensuring you only publish what genuinely fits your brand and audience.
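For the rate-limiting step in the workflow, this guide does not give specific limits, so any numbers you choose are assumptions. The sketch below takes a hypothetical cap of `max_per_window` uploads per rolling `window`, plus an optional minimum gap between uploads, and shifts requested upload times later until the plan respects both. It only builds a release schedule; it does not talk to any platform API.

```python
from collections import deque
from datetime import datetime, timedelta


def pace_uploads(requested, max_per_window, window, min_gap=timedelta(0)):
    """Return upload times, shifted later where needed, so that no rolling
    `window` holds more than `max_per_window` uploads and consecutive
    uploads are at least `min_gap` apart."""
    scheduled = []
    recent = deque()  # scheduled times that may still fall inside the window
    for t in sorted(requested):
        if scheduled:
            # Keep the schedule chronological and respect the minimum gap.
            t = max(t, scheduled[-1] + min_gap)
        while recent and recent[0] <= t - window:
            recent.popleft()  # this upload has aged out of the window
        if len(recent) >= max_per_window:
            # Window is full: wait until the oldest upload in it ages out.
            t = recent[0] + window
            while recent and recent[0] <= t - window:
                recent.popleft()
        recent.append(t)
        scheduled.append(t)
    return scheduled


# Hypothetical example: five uploads all requested for 9:00,
# at most two per hour and at least 15 minutes apart.
start = datetime(2026, 1, 1, 9, 0)
plan = pace_uploads([start] * 5, max_per_window=2,
                    window=timedelta(hours=1), min_gap=timedelta(minutes=15))
for slot in plan:
    print(slot.strftime("%H:%M"))
```

A rolling window is a stricter choice than a fixed "per hour on the clock" count, because it also prevents a burst that straddles the top of the hour.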

Key tools and methods: AI, human, and hybrid moderation

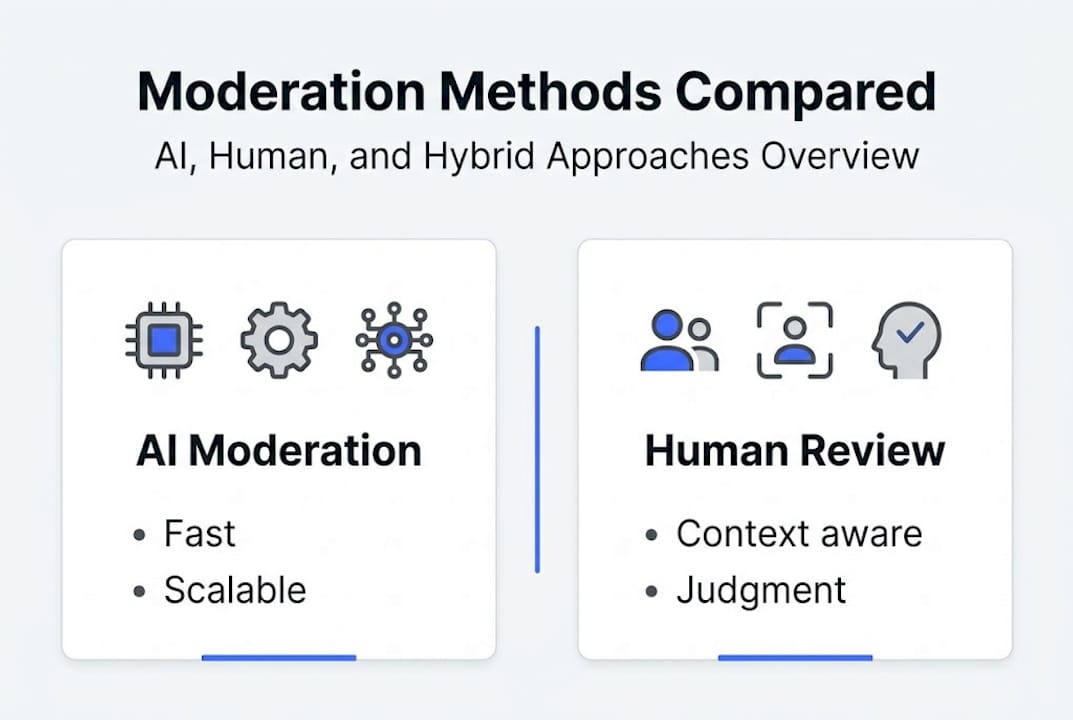

Not all moderation approaches are equal. Understanding what each one does well, and where it falls short, helps you build a smarter system as a solo creator.

AI moderation uses machine learning classifiers to scan content at high speed. In controlled lab environments, AI NSFW classifiers reach 95-97% accuracy. The problem is that labs are not the real world. In practice, these systems struggle with context, cultural nuance, and edge cases. They also carry documented bias: higher false positive rates for female and trans bodies mean legitimate content gets flagged more often than it should.

Human moderation brings contextual judgment that AI cannot replicate. A human reviewer understands tone, intent, and cultural context. The tradeoff is speed and sustainability. Human-only moderation does not scale, and reviewers experience high rates of burnout.

Hybrid moderation combines both. AI handles the volume and catches clear violations fast. Humans review borderline cases, apply context, and handle appeals. Hybrid systems achieve around 90% overall detection effectiveness in real-world conditions, making them the gold standard.
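One common way to implement this split is with two thresholds on a classifier's confidence score: clear cases are handled automatically and everything in between goes to a person. The sketch below is a minimal illustration under assumptions: `score` is a hypothetical probability of violation from whatever classifier or platform tool you use, and the 0.95 and 0.20 cut-offs are placeholders. Given the documented false-positive bias described above, a wide human-review band is the safer default for a solo creator, where "hold" simply means "do not publish yet".

```python
from dataclasses import dataclass


@dataclass
class ScoredItem:
    item_id: str
    score: float  # hypothetical classifier output: estimated probability of a violation


def triage(items, hold_at=0.95, approve_below=0.20):
    """Route items into 'hold', 'approve', or 'review' queues.

    Clear-cut cases are handled automatically; everything in the uncertain
    middle band goes to a human. Each queue is ordered from highest to lowest
    score, so the riskiest items are reviewed first.
    """
    queues = {"hold": [], "approve": [], "review": []}
    for item in sorted(items, key=lambda it: it.score, reverse=True):
        if item.score >= hold_at:
            queues["hold"].append(item.item_id)
        elif item.score < approve_below:
            queues["approve"].append(item.item_id)
        else:
            queues["review"].append(item.item_id)
    return queues


batch = [ScoredItem("a", 0.99), ScoredItem("b", 0.50),
         ScoredItem("c", 0.05), ScoredItem("d", 0.70)]
print(triage(batch))  # {'hold': ['a'], 'approve': ['c'], 'review': ['d', 'b']}
```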

| Approach | Speed | Context accuracy | Scalability | Cost | Bias risk |
|---|---|---|---|---|---|
| AI only | Very fast | Low | Very high | Low | High |
| Human only | Slow | Very high | Low | High | Low |
| Hybrid | Fast | High | High | Medium | Medium |

For solo creators, the practical application looks like this: use your platform’s built-in reporting and filtering tools as your AI layer, then apply your own manual review for anything flagged or borderline. Explore the range of content types in 2026 to understand which formats carry the highest moderation risk and prioritize your review time accordingly.

Dealing with gray areas and evolving trends

Most moderation problems do not involve obvious violations. They involve content that sits in an uncomfortable middle ground. Roleplay scenarios, parody content, emerging fetish categories, and satire all create situations where the right call is genuinely unclear.

The most effective approach here is case-based reasoning rather than rigid rule-following. Case-based frameworks adapt to evolving trends far better than static rulebooks because they ask: what has been decided in similar situations before? That question leads to smarter, more defensible decisions.
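The "what has been decided before" question can be made concrete with a small precedent log. The sketch below illustrates only the retrieval step of case-based reasoning, under assumptions: each past decision is stored as a hypothetical `Case` with hand-assigned tags, and similarity is plain Jaccard overlap between tag sets (shared tags divided by all distinct tags). It surfaces the closest precedents for you to weigh; the final call stays with you.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    """A past moderation decision (hypothetical record format)."""
    tags: frozenset
    decision: str  # e.g. "publish", "revise", "hold"
    note: str = ""


def jaccard(a, b) -> float:
    """Share of tags two sets have in common, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def nearest_precedents(new_tags, past_cases, k=3, min_similarity=0.3):
    """Return up to k (similarity, case) pairs, most similar first."""
    scored = [(jaccard(set(new_tags), case.tags), case) for case in past_cases]
    relevant = [pair for pair in scored if pair[0] >= min_similarity]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return relevant[:k]


log = [
    Case(frozenset({"roleplay", "costume"}), "publish"),
    Case(frozenset({"roleplay", "taboo"}), "revise", "added context to caption"),
    Case(frozenset({"parody", "public_figure"}), "hold"),
]
for similarity, case in nearest_precedents({"roleplay", "taboo"}, log):
    print(f"{similarity:.2f} {case.decision} {case.note}")
```

Each new decision you make can be appended to the log, so the precedents grow along with your catalog.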

Here is a snapshot of how platform rules have shifted recently:

| Content type | Previous status | 2026 enforcement trend |
|---|---|---|
| Consensual roleplay with taboo themes | Often allowed | Stricter review, context required |
| AI-generated adult content | Largely unregulated | Increasing disclosure requirements |
| Deepfake-adjacent content | Inconsistently enforced | Near-universal bans |
| Parody of public figures | Gray area | Platform-dependent, rising scrutiny |

Staying current on these shifts takes active effort. Here is how to do it:

- Subscribe to official policy update channels for every platform you use

- Follow active creator communities on forums and Discord servers where policy changes get discussed in real time

- Review your content library quarterly against current rules, not just at upload time

- Build relationships with other creators to share flagging experiences and solutions

Understanding social media rules for adult creators is also critical, since cross-promotion on mainstream platforms adds another layer of moderation complexity. And when you are looking for content that clears these bars while staying fresh, drawing from proven creative content ideas keeps your output both engaging and defensible.

A smarter moderation mindset: What creators miss

Here is the take most articles skip: creators who treat moderation as a burden imposed by platforms are always one step behind. The ones who reframe moderation as an act of community leadership are in a completely different position.

When you moderate well, you are not just avoiding bans. You are telling your audience that their experience matters to you. That signal builds trust faster than any promotional post. Creators who approach moderation this way tend to face fewer disputes and chargebacks and keep their fans longer.

The real shift is from reactive to intentional. Waiting for a flag means you are already losing. Building moderation into your weekly rhythm, the same way you schedule content or track earnings, means you are always ahead of the problem.

Pro Tip: Invite your most trusted subscribers to report anything that feels off. Turning moderation into a shared responsibility deepens their investment in your space and catches problems you might miss.

The biggest payoff is mental. Proactive moderation gives you peace of mind, and peace of mind is what lets you focus on creating. Strong content curation supports this mindset by helping you build a catalog that is both compelling and clean.

Elevate your moderation game on Fanspicy

Ready to put these moderation steps into action? Fanspicy is built for creators who take their craft seriously. The platform combines creator-friendly moderation tools with a supportive community that understands adult content, so you spend less time managing problems and more time growing your audience.

See how top creators like JackiePott and Somlusolme build engaged, loyal communities while keeping their content clean and compliant. Fanspicy gives you the infrastructure to publish smarter, not harder. Join Fanspicy and start building a content space where safety and creativity work together from day one.

Frequently asked questions

What is the most common first step in content moderation for adult creators?

Verifying consent and documentation for everyone appearing in your content is always the first step. No upload should happen without confirmed, documented permission from all individuals involved.

How accurate is AI content moderation for adult content?

AI classifiers reach 95-97% accuracy in lab conditions, but real-world performance drops because automated systems misread context and nuance and show documented bias, including higher false positive rates for female and trans bodies.

What are ‘gray area’ content moderation challenges?

Gray areas include roleplay, parody, emerging fetish categories, and borderline themes where platform rules are ambiguous. Case-based judgment consistently outperforms rigid rule-following in these situations.

How can creators stay updated on moderation rules?

Monitor official platform policy channels, participate in active creator communities, and review your content library quarterly to catch rule changes before they create problems.

Is human moderation still necessary with advanced AI tools?

Yes. Hybrid systems combining AI and human oversight achieve around 90% real-world effectiveness, making them far more reliable than AI alone for nuanced adult content review.